UW Courses

During my summer internship at Zillow.com, I finished a web application that I had started working on back in the spring. Initially, I was going to make a Facebook application to replace the old Schedules app, which let you enter the courses you were taking. To this end, I wrote a scraper for the UW Time Schedule and started working on the Model side of a Ruby on Rails app. Swept up by schoolwork, I soon ran out of motivation.

That quarter, I was taking a Statistics class aimed at Computer Science majors (UW Seattle: STAT 391). The final project was done in groups, and its topic was entirely up to the students. My group, which included Alan Fineberg and Justin McOmie, decided to explore the relationship between UW instructor salaries and the student evaluations of their courses. The University collects student evaluations of almost every course at the end of each quarter, and makes data from the last three quarters available at http://washington.edu/cec/. Salary data for the past decade is available biannually, apparently released in response to a FOIA request. Since three quarters of evaluations were not enough, we headed to the Internet Archive and dug up evaluations from 1998-2005. Unfortunately, in 2005 UW seems to have put the evaluations behind an authentication gateway, shutting poor bots out.

Anyway, processing the data was a pretty fun bit of data mining. We had to do a substantial amount of cleanup to handle duplicate instructor and evaluation entries, and to join the evaluations with the salary data the way we wanted. Although I won’t vouch for the soundness of our statistical foundations, we were unable to find any consistent, significant relationship between instructor performance and salary. We looked at tenured and non-tenured instructors in many departments. Take the finding with a grain of salt, as a proper analysis would need several things we lacked: research performance and grant money data, normalization of evaluations by course (some courses should be expected to rate lower because they are harder), and data from 2005-2007.
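The cleanup and join described above might look roughly like the following. This is a minimal Python sketch, not the code we actually used. The record layouts (`course`, `quarter`, `year`, `instructor`, `rating` for evaluations; `name`, `year`, `salary` for salary rows), the name-normalization rule, and the choice to match each evaluation with the most recent salary year at or before it (since salaries come only every so often) are all assumptions. A plain Pearson correlation stands in for whatever test a real analysis would use.

```python
import re
from collections import defaultdict
from statistics import mean


def normalize_name(raw):
    """Collapse variants like 'SMITH, JOHN A.' and 'John A Smith' to 'smith john'.

    Hypothetical rule: last name plus first given name, lowercased, punctuation
    removed. Real data would need more care (hyphenated or shared names, typos).
    """
    raw = raw.strip()
    if not raw:
        return ""
    if "," in raw:
        last, first = raw.split(",", 1)  # "Last, First Middle"
    else:
        parts = raw.split()  # "First Middle Last"
        last, first = parts[-1], " ".join(parts[:-1])
    given = first.split()
    key = f"{last.strip()} {given[0] if given else ''}".lower()
    return re.sub(r"[^a-z ]", "", key).strip()


def dedupe_evaluations(evals):
    """Keep the first record per (course, quarter, year, instructor); drop repeats."""
    seen = {}
    for ev in evals:
        key = (ev["course"], ev["quarter"], ev["year"], normalize_name(ev["instructor"]))
        seen.setdefault(key, ev)
    return list(seen.values())


def join_salaries(evals, salaries):
    """Pair each deduplicated evaluation's rating with the instructor's salary.

    Uses the most recent salary year at or before the evaluation's year;
    evaluations with no such salary row are skipped.
    """
    by_name = defaultdict(list)
    for row in salaries:
        by_name[normalize_name(row["name"])].append((row["year"], row["salary"]))
    pairs = []
    for ev in dedupe_evaluations(evals):
        history = sorted(by_name.get(normalize_name(ev["instructor"]), []))
        earlier = [salary for year, salary in history if year <= ev["year"]]
        if earlier:
            pairs.append((earlier[-1], ev["rating"]))
    return pairs


def pearson(pairs):
    """Pearson correlation coefficient of (salary, rating) pairs."""
    xs, ys = zip(*pairs)
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in pairs)
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / (sx * sy)
```

A coefficient near zero on its own says little; a real analysis would add a significance test and, as noted above, normalize ratings by course.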

I was responsible for scraping the evaluations, and I decided to make a web app that would present this new wealth of data in a useful way. Mainly, I wanted to enable searching and to present average ratings by course and by instructor. Writing the application was a fun way to learn Rails and an exercise in test-driven development. Development simmered slowly for a while, then picked up over the last few weeks of the summer (curiously, just as I got busier at Zillow).
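Computing averages by course and by instructor is essentially a group-by. The sketch below is hypothetical and in Python rather than the app's Rails code: it assumes each evaluation record carries a mean `rating` and a `responses` count, weights by respondents, and pairs the result with a naive case-insensitive substring search.

```python
from collections import defaultdict


def average_ratings(evals, key):
    """Respondent-weighted mean rating per group.

    `key` is a field name such as "course" or "instructor". Each evaluation is
    assumed (hypothetically) to carry a mean "rating" and a "responses" count.
    Groups whose total response count is zero are omitted.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for ev in evals:
        totals[ev[key]] += ev["rating"] * ev["responses"]
        counts[ev[key]] += ev["responses"]
    return {group: totals[group] / counts[group] for group in totals if counts[group] > 0}


def search(averages, query):
    """Case-insensitive substring match on group names, highest average first."""
    q = query.lower()
    hits = [(name, avg) for name, avg in averages.items() if q in name.lower()]
    return sorted(hits, key=lambda item: item[1], reverse=True)
```

Weighting by respondents is a design choice. An unweighted mean would treat a ten-person seminar and a large lecture equally, which may or may not be what a student browsing the site wants.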

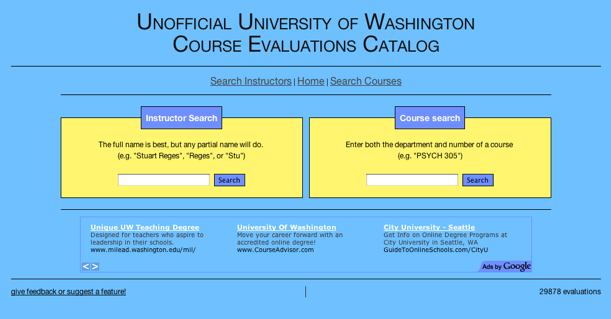

The result is accessible to all at UW Courses. I would be honored if you checked it out, and I hope that UW students find it useful. It contains about five times as many evaluations as UW makes available, and provides a decent search interface. The source code for the whole application is on GitHub. Feel free to contribute.

Rails was a somewhat poor choice for this application, because the data it presents is essentially static. I feel that Rails really shines in productivity and ease of use for applications with dynamic, not static, data. I also got a good look at Rails performance issues: the sheer amount of data I was dealing with meant that I ran into “scaling” problems immediately. The site could be blazing fast with page caching (with memcached), but my hosting solution, a 256 MB Slicehost slice, lacks the memory.